Why the Future of Financial Wellbeing May Depend on Who Controls the Operating System

For decades, financial technology has largely been built for institutions.

Not for people.

The interfaces may have looked consumer-friendly.

The branding may have spoken about empowerment, trust, and outcomes.

But beneath the surface, much of the architecture of modern financial technology was designed around a very different objective:

managing institutional scale, efficiency, compliance, retention, and distribution.

Now, artificial intelligence is beginning to challenge that model.

And the response from parts of the institutional SaaS market has become increasingly revealing.

Because when ordinary individuals suddenly gain access to AI-powered planning engines that help them:

- think clearly,

- model decisions,

- compare scenarios,

- interrogate assumptions,

- understand contracts,

- organise evidence,

- and explore consequences independently…

…the traditional economics of intermediation begin to shift.

The issue is no longer simply:

“Can AI do financial planning?”

The deeper question is:

“What happens when individuals no longer need institutions to think on their behalf?”

That is the real disruption.

The Institutional SaaS Model Was Built for a Different Era

The modern financial planning SaaS ecosystem emerged during a period defined by:

- information asymmetry,

- opaque products,

- complex regulation,

- limited consumer access to expertise,

- and expensive specialist software.

In that world, institutions became the navigators of complexity.

Consumers delegated.

Professionals intermediated.

Platforms industrialised the process.

Most institutional SaaS tools were therefore designed primarily to support:

- advisers,

- paraplanners,

- compliance teams,

- wealth firms,

- product distributors,

- and recurring revenue models.

Not individuals directly.

That distinction matters.

Because software naturally evolves around the incentives of the system funding it.

And historically, the commercially valuable activity was not:

helping ordinary people think independently.

It was:

supporting regulated intermediation.

The Advice Gap Was Also a Tool Gap

Only a small percentage of the population has historically had access to high-quality financial planning.

Most people:

- never reached an adviser,

- could not afford ongoing delegation,

- felt intimidated by the process,

- or disengaged entirely.

The result was a two-tier system:

- a small minority receiving structured planning support,

- and a large majority navigating increasingly complex financial systems alone.

In many ways, this was not merely an advice gap.

It was a capability gap.

And behind the capability gap sat a tooling gap.

Professional-grade planning infrastructure remained largely inaccessible to ordinary citizens.

Not because people lacked intelligence.

But because:

- the tools were expensive,

- the terminology was intimidating,

- and the systems were designed for professionals operating inside institutional workflows.

AI changes that equation.

The New Operating System: Agency-Centred Planning

The emerging generation of AI-powered planning engines is fundamentally different.

Rather than beginning with:

“How do we support institutional workflow?”

They begin with:

“How do we increase human capability?”

That distinction changes everything.

At Total Wealth Plans, our ecosystem was not designed around asset gathering or product manufacture.

It was designed around restoring human agency.

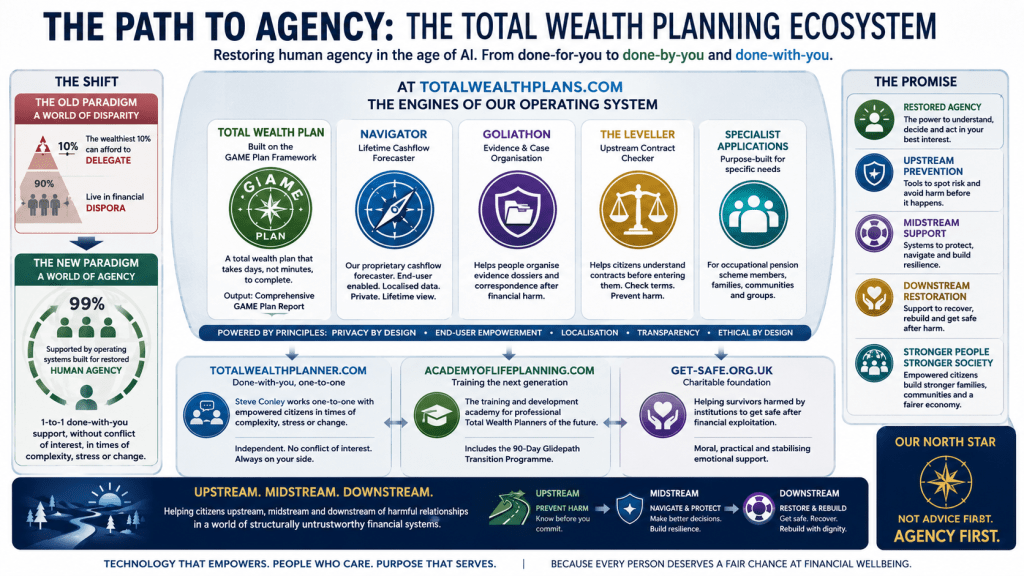

The engines of the operating system include:

- Total Wealth Plan™

- Navigator™

- Goliathon™

- The Leveller™

- specialist applications such as the Occupational Pension Planning Engine™

Each serves a different purpose across the financial harm lifecycle:

- upstream prevention,

- midstream navigation,

- and downstream recovery.

The goal is not dependency.

The goal is capability.

Not:

“Trust us and delegate.”

But:

“Understand more clearly before acting.”

That is a profound philosophical shift.

Why Institutional SaaS Providers Feel Threatened

Some institutional SaaS providers have criticised AI-enabled citizen planning tools using arguments such as:

- AI outputs are not fully repeatable,

- AI can hallucinate,

- tools require stronger guardrails,

- consumers may trust outputs too easily,

- and institutional-grade auditability matters.

Some of these concerns are legitimate.

AI systems absolutely require:

- careful design,

- explainability,

- validation,

- privacy safeguards,

- and thoughtful human oversight.

But there is a deeper issue hidden beneath many of these criticisms.

The real concern is often not that AI tools look bad.

It is that they look good enough.

Good enough for ordinary people to begin thinking independently.

Good enough to reduce psychological dependency.

Good enough to weaken information asymmetry.

Good enough to expose how much of traditional intermediation depended upon complexity itself.

That is the real tension.

Complexity Has Historically Been Commercially Valuable

Much of modern financial services depends economically on consumers believing:

- the system is too complex to navigate independently,

- products are too complicated to understand,

- financial futures require specialist interpretation,

- and professional intermediation is therefore essential.

Some of this is true.

But complexity also functions as a commercial moat.

Because complexity:

- protects margins,

- reinforces dependency,

- discourages challenge,

- and increases perceived expert value.

Historically, only affluent individuals could access sophisticated planning support.

Everyone else lived largely outside the planning system.

AI begins to compress that asymmetry.

And once complexity becomes computationally accessible, institutional positioning changes dramatically.

Approximately Right vs Precisely Wrong

One of the most important questions emerging in the AI era is this:

What matters more:

- being approximately right in service of human wellbeing,

- or being precisely wrong inside an auditable institutional framework?

Because history shows us that highly structured, repeatable, compliant systems can still produce deeply harmful outcomes.

Examples include:

- unsuitable pension transfers,

- opaque commission structures,

- endowment mortgage mis-selling,

- PPI,

- excessive product layering,

- and countless suitability reports built around assumptions that later failed in reality.

These systems were often:

- auditable,

- repeatable,

- compliant,

- and professionally produced.

But not necessarily aligned with the long-term interests of the individual.

This is a crucial distinction.

Auditability is not the same as wisdom.

Repeatability is not the same as alignment.

Compliance is not the same as human wellbeing.

Occupational Pension Planning and the Blind Spots of AUM Economics

Consider occupational pension planning.

For millions of people, decisions around:

- contribution levels,

- retirement timing,

- transfer choices,

- income sequencing,

- and trade-offs between security and flexibility…

…are life-defining.

Yet these areas have historically received relatively little innovation attention compared with portfolio management ecosystems.

Why?

Because many occupational pension decisions are:

- episodic,

- analytical,

- educational,

- and not directly linked to recurring AUM revenue.

The incentive structures differ.

This is why AI-native agency tools matter.

The Occupational Pension Planning Engine™ was created specifically to help individuals:

- model choices,

- compare options,

- understand consequences,

- and think clearly before acting.

Not to gather assets.

Not to maximise product sales.

But to increase capability.

That distinction is becoming increasingly important.

Trust vs Structural Trustworthiness

The financial industry frequently speaks about trust.

But public trust in financial institutions remains persistently low.

The issue may not simply be communication.

It may be structural alignment.

There is a difference between:

marketing trust

and

being structurally trustworthy.

A structurally trustworthy system:

- minimises conflicts of interest,

- reduces information asymmetry,

- supports informed consent,

- increases transparency,

- and empowers individuals to understand decisions affecting their lives.

By contrast, structurally untrustworthy systems often:

- rely on opacity,

- reward dependency,

- exploit behavioural inertia,

- monetise asymmetry,

- and frame consumer confusion as commercial opportunity.

Importantly, this does not mean every adviser or institution acts maliciously.

Most individuals inside these systems are trying to help people.

But systems optimise around incentives.

And incentives shape behaviour.

That is why the age of AI matters so profoundly.

Because AI dramatically lowers the cost of:

- questioning,

- comparing,

- modelling,

- understanding,

- and independent thought.

For the first time at scale, structural trustworthiness may become commercially viable.

The Future Is Not Anti-Professional

None of this means professionals disappear.

Far from it.

But the role changes.

The future professional may increasingly become:

- a guide,

- interpreter,

- coach,

- ethical sounding board,

- behavioural stabiliser,

- and judgement partner.

Not merely:

- a gatekeeper of information,

- operator of complex software,

- or distributor of opaque products.

The best professionals will help individuals think better, not think less.

That is the distinction.

Before, During, and After Institutions

The future of financial wellbeing may increasingly depend on whether individuals have access to technology that supports them:

- before engaging institutions,

- during institutional relationships,

- and after harm occurs.

Before:

Can they understand contracts, assumptions, incentives, and risks?

During:

Can they model options independently and ask better questions?

After:

Can they organise evidence, rebuild clarity, and recover agency?

This is why the Total Wealth Planning ecosystem exists.

Not as a replacement for all professional support.

But as a capability infrastructure for ordinary people navigating increasingly complex systems.

The Real Question

The debate is not ultimately about whether AI is perfect.

It is not.

The real question is this:

Who should hold the primary thinking power in financial life:

institutions,

or individuals?

The old system largely assumed delegation.

The emerging system increasingly enables participation.

And that may prove to be one of the most important societal transitions of the AI era.

Because in the end, the goal is not merely better financial products.

It is restored human agency.