For decades, institutions benefited from one overwhelming structural advantage:

They held the information.

Banks held the expertise.

Governments held the systems.

Corporations held the infrastructure.

Professionals held the knowledge.

Media held the narrative.

Universities held credentialed authority.

Ordinary people largely depended on institutions because complexity exceeded individual capability.

That dependency shaped the modern world.

It shaped:

- employment,

- finance,

- education,

- healthcare,

- law,

- regulation,

- and social organisation itself.

But something historic is now happening.

Artificial intelligence is beginning to collapse information asymmetry at scale.

And that may become one of the most important structural shifts of the 21st century.

Because once individuals gain direct access to analytical capability, institutions no longer control understanding in the same way they once did.

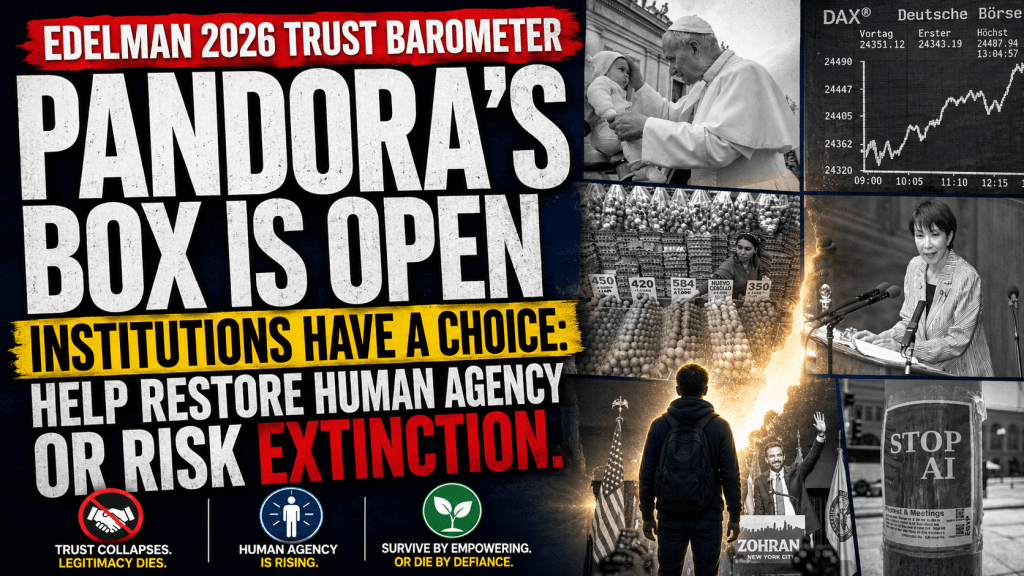

Pandora’s box is open.

And many institutions still appear not to fully grasp what that means.

The Trust Crisis Institutions Helped Create

The warning signs have been building for years.

Across politics, media, finance, healthcare, technology, and government, public trust has steadily eroded.

The 2026 Edelman Trust Barometer paints a deeply concerning picture of institutional confidence across the world.

The report identifies a growing global retreat into:

- polarisation,

- grievance,

- and insularity.

Its findings are stark.

Globally, only 32% of people believe the next generation will be better off than today.

Job fears linked to recession and trade disruption are at all-time highs.

A majority of low-income individuals fear they will be left behind by AI rather than benefit from it.

And perhaps most significantly, 70% of people globally now exhibit what Edelman calls an “insular trust mindset” — a reluctance or hesitation to trust people who are different from them.

This matters because trust is not merely emotional.

Trust is infrastructural.

Modern societies function because people believe:

- systems are broadly fair,

- institutions are broadly competent,

- incentives are broadly aligned,

- and authorities broadly act in good faith.

When that belief weakens, social cohesion weakens with it.

And in many industries — particularly financial services — there is mounting evidence that distrust is not irrational paranoia.

It is often a rational response to structurally misaligned systems.

Consumers increasingly see:

- hidden incentives,

- opaque charging structures,

- conflicts of interest,

- asymmetries of knowledge,

- regulatory failures,

- excessive complexity,

- and institutional self-preservation.

The public may not always understand the technical details.

But they increasingly sense the structural reality.

Many institutions were designed primarily to preserve institutional continuity and profitability — not necessarily to maximise human agency.

That distinction matters enormously.

AI Changes the Balance of Power

Historically, institutions could maintain legitimacy partly because individuals lacked alternatives.

If you wanted:

- financial analysis,

- legal interpretation,

- data processing,

- document review,

- modelling,

- strategic thinking,

- or large-scale research,

you usually needed an institution.

Now, increasingly, all you need is a laptop.

AI is not merely automating labour.

It is distributing cognitive capability.

A single individual with AI can now:

- analyse contracts,

- compare financial structures,

- model scenarios,

- challenge assumptions,

- organise evidence,

- create software,

- conduct research,

- generate educational content,

- and coordinate workflows

at a level that previously required teams or departments.

That changes the relationship between citizens and institutions fundamentally.

For the first time in modern history, individuals are beginning to regain the capability to interrogate systems directly.

This is the beginning of restored human agency.

And institutions should understand something clearly:

This trend is unlikely to reverse.

You cannot put the genie of cognitive decentralisation back in the bottle.

You cannot “uninvent” AI.

You cannot permanently maintain authority structures built entirely upon informational asymmetry once analytical capability becomes widely distributed.

Pandora’s box is open.

The Great Institutional Fork in the Road

This creates a profound strategic choice for institutions.

One path is resistance.

Some institutions will likely attempt to:

- increase dependency,

- preserve opacity,

- centralise control,

- restrict interoperability,

- weaponise regulation,

- or maintain old gatekeeping models.

But this approach carries enormous long-term risk.

Because institutions that are perceived as obstructing human agency may increasingly be viewed as adversarial rather than supportive.

And once trust collapses, institutional legitimacy becomes fragile.

The alternative path is adaptation.

Institutions can reposition themselves around:

- empowerment,

- transparency,

- capability-building,

- trust brokering,

- and ecosystem participation.

This may ultimately prove to be the only sustainable route.

The Edelman Trust Barometer itself points in this direction.

The report identifies “trust brokering” as a critical emerging institutional role — helping groups understand one another, facilitating cooperation, and rebuilding trust across divisions.

Importantly, Edelman found that people increasingly expect institutions — especially employers and businesses — to actively facilitate trust-building rather than merely maximise profit.

This is a profound shift.

Because it means institutions are no longer judged solely on:

- efficiency,

- scale,

- or profitability.

They are increasingly judged on whether they contribute positively to human flourishing and societal coherence.

That changes the future role of business itself.

Financial Services: The Front Line of the Agency Revolution

This may be especially true in financial services.

Why?

Because finance sits at the centre of human dependency structures.

Money influences:

- housing,

- retirement,

- healthcare,

- education,

- employment,

- relationships,

- opportunity,

- and security itself.

Historically, consumers often had little choice but to rely heavily on financial institutions and intermediaries because the system was too complex to navigate independently.

But AI changes capability economics.

Consumers increasingly have access to:

- educational tools,

- planning systems,

- contract analysis,

- modelling engines,

- and AI-supported guidance

without necessarily relying on traditional institutional mediation.

This does not mean expertise disappears.

Far from it.

But it changes the role of expertise.

The future trusted institution may not be the one that says:

“Leave it to us.”

But the one that says:

“We will help you become more capable.”

That is a radically different relationship.

And institutions that fail to recognise this shift may increasingly appear paternalistic, extractive, or obsolete.

From Delegation to Agency

At the Academy of Life Planning, we increasingly believe society is moving through a civilisational transition:

From delegation toward agency.

From opaque systems toward transparent ecosystems.

From institutional dependency toward capability-supported autonomy.

This does not mean individuals become isolated.

In fact, the opposite may be true.

As complexity rises, people may need:

- ecosystems,

- collaborative communities,

- trusted guides,

- AI-supported tools,

- human-centred education,

- and ethical support structures

more than ever.

But the relationship changes fundamentally.

The individual remains the primary agent.

The ecosystem exists to strengthen capability — not replace it.

This is why the future may increasingly belong not to institutions that control people…

…but to institutions that help restore human agency.

Survive or Die

This may sound dramatic.

But history is full of institutions that failed to adapt when structural conditions changed.

The industrial era rewarded scale, centralisation, and informational control.

The AI era may increasingly reward:

- transparency,

- adaptability,

- empowerment,

- ecosystem thinking,

- and trustworthiness.

Institutions now face a simple but profound question:

Will you participate in the restoration of human agency?

Or will you resist it?

Because the public increasingly has alternatives.

And once individuals begin experiencing agency directly, dependency becomes much harder to sell.

That is the real significance of AI.

Not simply automation.

But the redistribution of cognitive power itself.

The next decade may determine which institutions evolve into trusted ecosystem participants…

…and which become relics of a fading age of institutional dependency.

The choice may ultimately be very simple:

Restore agency.

Or risk extinction.

Richard Edelman frames the 2026 Trust Barometer around a new trust crisis: insularity. He describes a progression from polarisation, to grievance, to insularity — where people increasingly trust only those who share their values, information sources and preferred solutions.

The consequences are serious. Insularity creates closed trust ecosystems, narrower worldviews, distrust as the default setting, less dialogue, more nationalism, and a shift from “we” to “me”. Edelman argues this makes it much harder to solve major problems such as reskilling, affordable housing, AI adoption and geopolitical cooperation.

Several drivers are identified: people switching off from news, economic anxiety, inflation, tariffs, fear of AI-related job loss, collapsing optimism about the next generation, and eroding institutional trust. Edelman says 70% of people believe leaders are liars, including CEOs, government leaders and media leaders.

AI is treated as a major accelerant. Arvind Krishna argues AI will change jobs but not necessarily reduce total employment, while Dan Schulman is more blunt: AI, quantum and robotics together may produce serious job displacement within 5–10 years. He warns that business leaders must be honest about this and stop pretending reskilling is an easy answer.

The proposed response is trust brokering. Edelman defines this as helping alienated groups bridge differences, surface common interests and “disagree better”. Employers are seen as especially important because “my employer” is more trusted than business generally, and the workplace can provide structured spaces for civil dialogue.

The panel also stresses that institutions must align words with actions. Multinationals can no longer extract value and leave; they must show long-term local contribution. Leaders need to be transparent, admit uncertainty, support employees through change, and create psychologically safe spaces for difficult conversations.

The insight most relevant to the Academy of Life Planning (AoLP) is this: insularity is ultimately a response to lost agency. People retreat into closed circles when they feel systems are too big, too opaque, too fast-moving, or too untrustworthy.

That links directly to our thesis:

AI does not simply threaten institutions.

It gives individuals new capability.

Institutions now face a choice: help restore agency, or be seen as part of the problem.

The closing message was cautiously optimistic: trust can be rebuilt, but not through slogans. Edelman’s line was: “Trust drives growth, but action earns trust.”