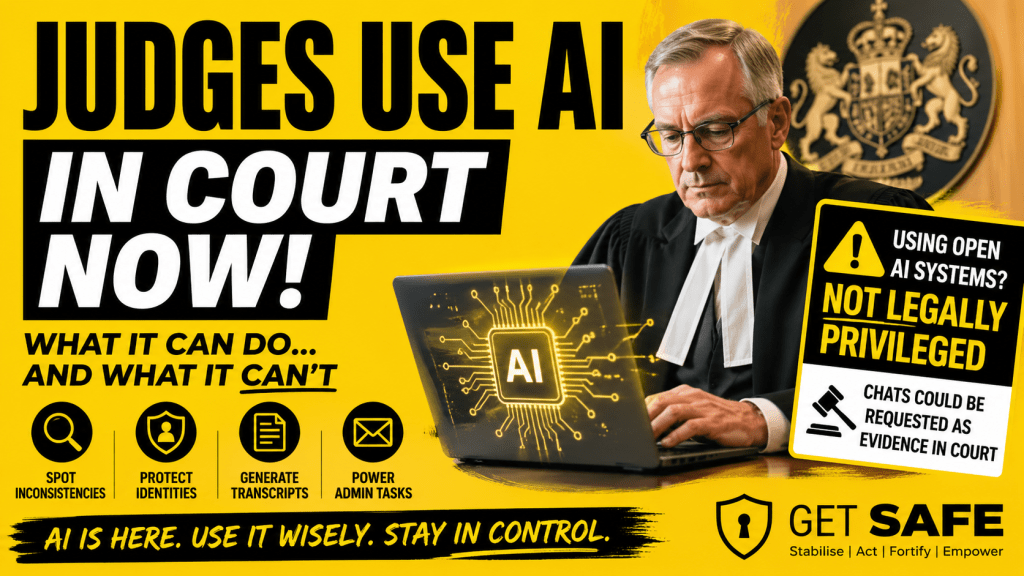

There’s been a quiet but important shift in the UK courts.

Senior judges are now openly using artificial intelligence as part of their day-to-day work. Not to decide cases—but to improve clarity, reduce error, and increase efficiency.

That matters. Because it shows where AI is genuinely useful—and where the boundaries still sit.

The judiciary has started using AI—carefully

Sir Colin Birss, a senior judge in England and Wales, recently outlined how AI is already being used inside the courts.

Not for judgment. Not for legal reasoning.

But for support tasks that improve quality and consistency.

Here are the key uses:

1. Checking for inconsistencies

Once a judgment is written, AI can review it and highlight:

- contradictions

- unclear wording

- internal inconsistencies

Judges remain fully responsible—but the tool acts as a second pair of eyes.

2. Protecting identities

AI is used to help anonymise judgments by spotting details that could lead to:

- indirect identification

- “jigsaw identification” when multiple details are combined

This is about protecting people, not replacing human judgement.

3. Generating transcripts

AI can produce transcripts from hearings more quickly and accurately—something that could significantly improve access to justice over time.

4. Handling administrative tasks

Searching emails, retrieving documents, and organising information—AI is already transforming the back-office workload of the courts.

The key boundary: humans stay accountable

The most important point isn’t what AI can do.

It’s what judges are not doing with it.

- They are not outsourcing decisions

- They are not relying on AI as authority

- They remain fully accountable for every judgment

AI is being used to support thinking, not replace it.

That’s the model.

So where does this leave you?

If you’re dealing with a complaint, dispute, or potential legal action, AI can be incredibly helpful.

It can help you:

- organise your timeline

- structure your evidence

- draft communications

- clarify your thinking

In many cases, it allows people to present their situation more clearly than ever before.

But there’s something important you need to understand.

AI is not legally protected

If you speak to a solicitor to get legal advice, those confidential conversations are protected by law.

This is called legal professional privilege.

It means those communications:

- are confidential

- cannot normally be demanded or used in court

But this protection does not apply when you use public AI systems.

What that means in practice

If you use public AI tools:

- your conversations are not legally privileged

- what you write may form part of your document trail

- in a formal dispute, relevant material could be requested as evidence

This doesn’t mean anyone is watching your chats.

It means that if your case reaches a legal stage, what you’ve created may be treated like any other document.

Don’t panic—just use AI wisely

This is not a reason to avoid AI.

It’s a reason to use it properly.

Here’s how to stay safe:

Stick to facts

Focus on what happened, when, and who was involved.

Avoid speculation

Don’t guess motives or make assumptions in formal communications.

Use AI to think—not to vent

You can explore ideas freely—but be deliberate about what you turn into formal documents.

Separate drafting from sending

Use AI to prepare. Then review carefully before sharing anything externally.

Keep control of your narrative

You are building a clear, consistent record over time—not trying to win in one message.

The bigger picture

AI is changing the balance of power.

It’s giving individuals the ability to:

- structure complex situations

- communicate more clearly

- challenge institutions more effectively

Even the courts are embracing it—for the right reasons.

But with that power comes responsibility.

The Get SAFE perspective

At Get SAFE, we don’t tell you to avoid AI.

We help you use it safely and effectively.

- To stabilise your thinking

- To structure your case

- To support your next steps

Without creating unnecessary risk.

Final thought

The courts have found their balance:

Use AI to improve clarity.

Keep humans responsible.

You can do the same.

Use AI to strengthen your position.

Stay in control of what you say, and how you say it.

If you’re working through a situation and need help getting started, Goliathon and The Leveller are designed to give you that clarity, step by step, at your pace.