Last summer, the UK Supreme Court confirmed something many in the industry had long argued for:

Car dealers arranging finance as credit brokers do not owe a fiduciary duty to their customers.

For some, that was a legal clarification.

For others, it was a line in the sand.

Because stripped back to its essence, the ruling reinforced a simple reality:

The industry operates on caveat emptor — buyer beware.

Not “we will act in your best interests.”

But “you are responsible for your own decisions.”

The Industry Got What It Asked For

For years, parts of the financial services sector resisted the idea of a heightened duty to customers.

Why?

Because fiduciary responsibility:

- Constrains commercial behaviour

- Limits conflicts of interest

- Requires full alignment with the customer’s interests

By contrast, a non-fiduciary model allows:

- Sales-driven incentives

- Variable pricing

- Commercial flexibility

In short:

It grants the industry full agency.

And the Supreme Court decision confirmed that position.

But Something Has Changed

What the ruling did not account for is what happened next.

AI.

In 2026, the balance of power is shifting — not through regulation, but through capability.

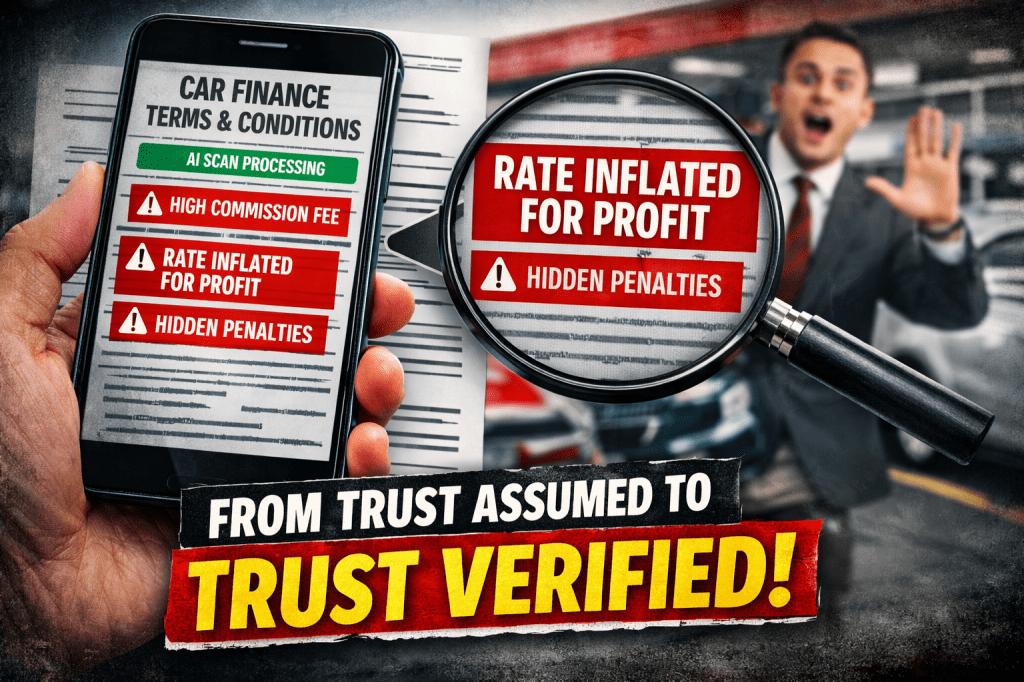

Today, a consumer can:

- Take a photo of the terms and conditions

- Upload it to an AI tool

- Ask: “What am I not being told?”

- Receive a clear, structured answer in seconds

Not hours. Not days. Seconds.

From Passive Consumer to Active Challenger

This is not a marginal improvement.

It is a structural shift.

Historically:

- Contracts were long, dense, and technical

- Disclosure was often buried

- Understanding required time, expertise, or both

The system, whether intentionally or not, relied on:

information asymmetry

Now, that asymmetry is collapsing.

Because AI can:

- Translate legal language into plain English

- Surface hidden incentives

- Identify conflicts of interest

- Highlight unusual or unfavourable terms

And crucially:

It does this at the point of decision.

The Moment of Truth

Imagine the interaction.

A customer is offered finance.

They pause.

They scan the agreement.

They ask their AI assistant:

“Is this deal fair?”

And within seconds, they are told:

- How the rate compares with typical market rates

- Whether incentives may be influencing it

- What the risks are

- What questions to ask

At that moment, something fundamental happens:

The customer is no longer dependent.

They are informed.

They are empowered.

They can challenge.

And if trust is broken:

They can walk away.

Trust, Repriced

This is where the real shift lies.

For decades, the industry has relied — implicitly — on:

- Speed of transaction

- Assumed trust

- Limited scrutiny

AI changes all three.

Now:

- Scrutiny is instant

- Questions are informed

- Trust must be earned in real time

Which leads to an unavoidable conclusion:

In a world of AI-enabled consumers, opaque pricing becomes commercially fragile.

A Word on Resistance

There is a growing narrative in some quarters that consumers should be cautious about using AI in financial decisions.

Caution is sensible.

Discouragement is not.

Because we must ask:

Who benefits if consumers remain dependent, uncertain, or uninformed?

AI does not remove responsibility from the individual.

It enhances it.

It gives people the tools to:

- Understand

- Question

- Decide

To argue that consumers should not use such tools — particularly when entering complex financial agreements — raises important questions about intent.

The Restoration of Human Agency

This is the deeper story.

The Supreme Court ruling reinforced a system where:

The burden of understanding sits with the individual.

AI makes that burden manageable.

It restores something that had been eroded over time:

Human agency

Not theoretical agency.

Not legal agency.

But practical, usable, real-world agency.

What This Means for the Future

The implications are significant.

For consumers:

- Greater confidence

- Better decision-making

- Reduced vulnerability to poor outcomes

For firms:

- A need for genuine transparency

- Pricing that can withstand scrutiny

- Conversations that hold up under challenge

And for the system as a whole:

A shift from trust assumed to trust verified

Closing Reflection

The industry asked for a model where the customer is responsible.

That model has now met a new reality.

A customer equipped with AI is no longer:

- Passive

- Dependent

- Or easily steered

They are:

- Informed

- Capable

- And increasingly unwilling to accept what they don’t understand

Human agency has not been granted by regulation.

It has been restored by technology.

And those who seek to limit that restoration — particularly at the point where individuals are making financial decisions — deserve careful scrutiny.

Because in the end, this is not about AI.

It is about power.

And for the first time in a long time, that power is moving back toward the individual.